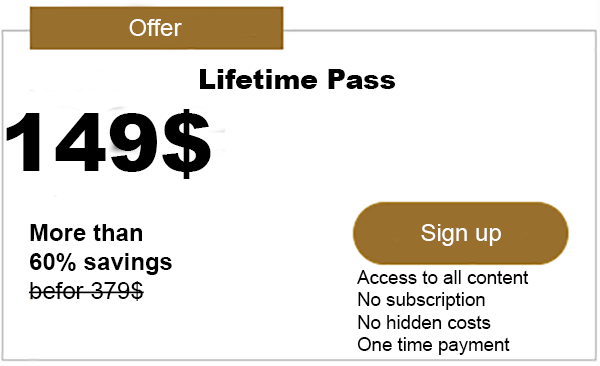

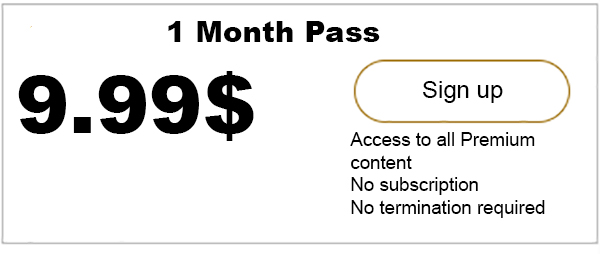

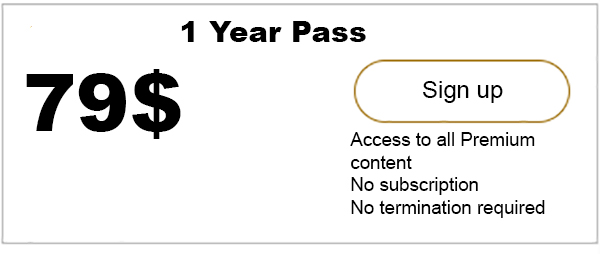

Rate control in distributed ledgers

In most networks, there are circumstances where the incoming traffic load exceeds what the network can handle. If nothing restricts the traffic entering the network, bottlenecks can slow down the entire network. Additionally, the buffer space at some nodes may be exhausted, leading to discarding some…

iota-news.com is the author of this content. TheBitcoinNews.com is not responsible for the content of external sites.
